Dental Suction Robot

Imitation-learning robot that holds a dental suction tube, replacing assistants.

Overview

Canada is short roughly 5,000 dental assistants. Clinics are understaffed, dentists are burning out, and patients in rural and Indigenous communities are waiting over a month just to be seen. Clinics can't function efficiently without assistants present.

I visited local dental clinics and talked to dental students to understand the issue better, and I kept hearing the same thing: dentists love working with assistants, but a large amount of what assistants do during procedures is very routine (holding suction tubes, repositioning tools, etc.).

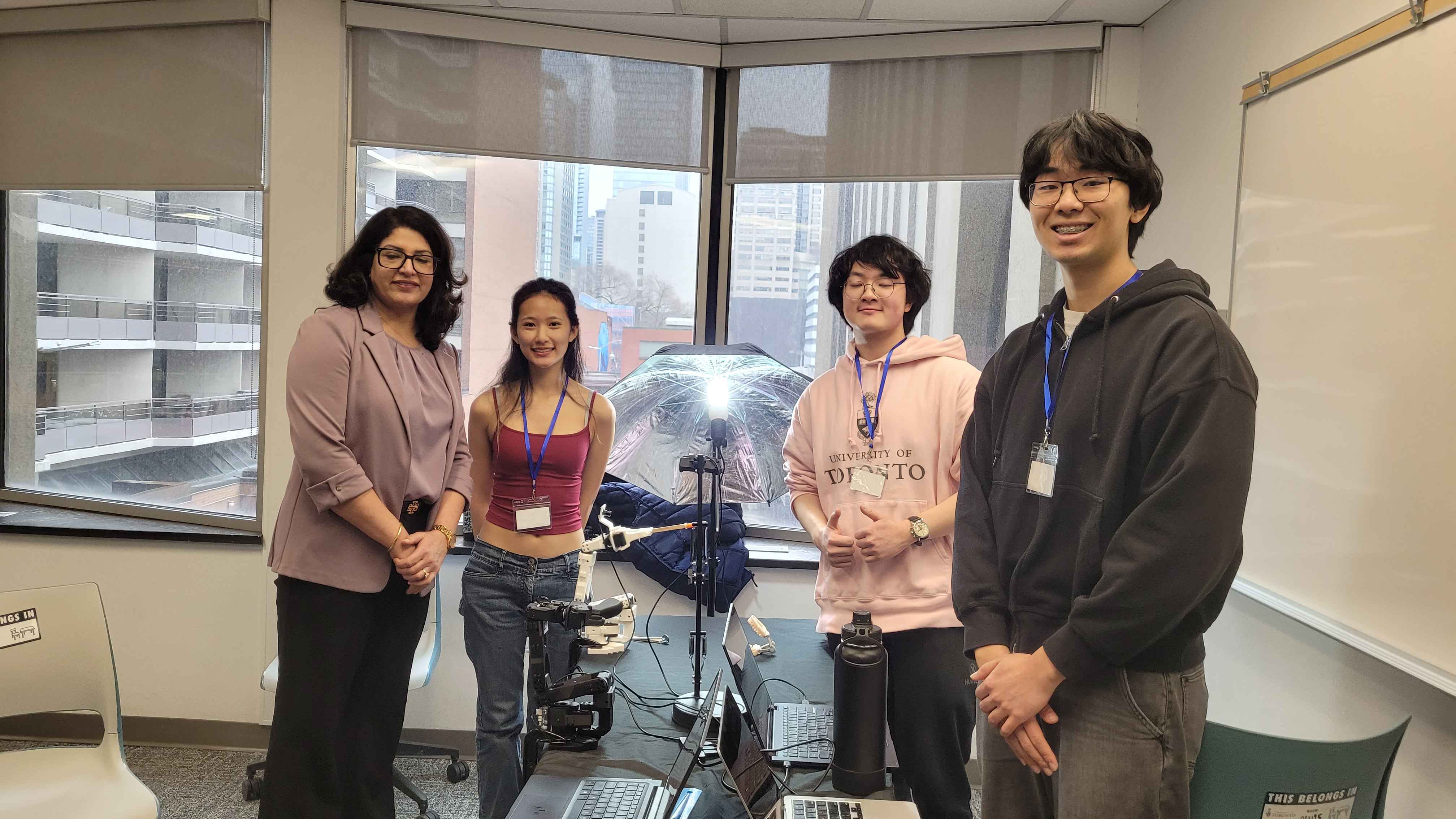

So for SmileHacks 2026, we built a robot that acts as a dental assistant's third arm, helping clinics run more efficiently by automating these repetitive tasks.

How It Works

We used the SO101 robotic arm from LeRobot as the prototype for our dental suction robot. We teleoperated the robot through suction tube management tasks while recording synchronized video and motor action data into a LeRobotDataset. Then we ran imitation learning with a Diffusion Policy so the robot could reproduce the human operator's timing and positioning autonomously.

Finally, the trained policy is deployed on the robot in a real clinic environment for validation.
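To make the "synchronized video and motor action data" idea concrete, here is a minimal sketch of how camera frames could be paired with motor readings by timestamp. This is not our recording code and not the LeRobot API: the function name `pair_frames_with_actions`, the `max_skew` tolerance, and the sample values are all hypothetical, chosen only to illustrate the idea.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple


def pair_frames_with_actions(
    frame_times: Sequence[float],
    action_times: Sequence[float],
    actions: Sequence[Sequence[float]],
    max_skew: float = 0.02,  # hypothetical tolerance, in seconds
) -> List[Tuple[int, Sequence[float]]]:
    """Pair each frame index with the motor action closest in time.

    Assumes action_times is sorted in ascending order. Frames with no
    action within max_skew seconds are dropped instead of being paired
    with stale data.
    """
    pairs = []
    for i, t in enumerate(frame_times):
        # Index of the first action timestamp that is >= t.
        j = bisect_left(action_times, t)
        # The nearest action is either just before or just after t.
        candidates = [k for k in (j - 1, j) if 0 <= k < len(action_times)]
        if not candidates:
            continue
        best = min(candidates, key=lambda k: abs(action_times[k] - t))
        if abs(action_times[best] - t) <= max_skew:
            pairs.append((i, actions[best]))
    return pairs


if __name__ == "__main__":
    # Made-up timestamps: a ~30 fps camera and a slightly offset motor stream.
    frame_times = [0.0, 0.033, 0.066, 0.2]
    action_times = [0.001, 0.03, 0.07, 0.1]
    actions = [[0.0], [1.0], [2.0], [3.0]]  # one joint value per sample
    for frame_idx, action in pair_frames_with_actions(
        frame_times, action_times, actions
    ):
        print(frame_idx, action)
```

Dropping frames that have no nearby motor reading keeps the policy from learning from mismatched observation and action pairs, which matters when timing is the thing being imitated.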

Results

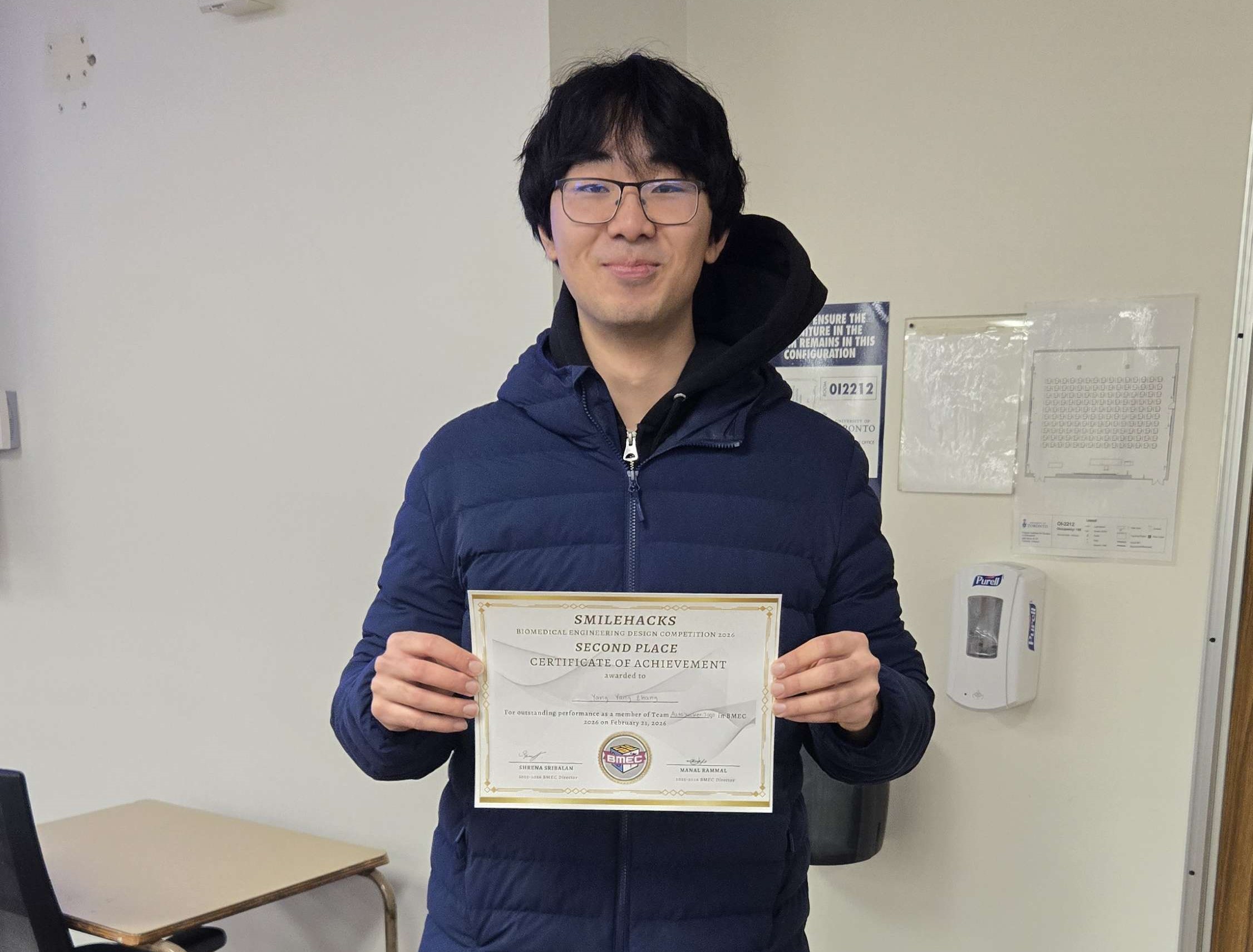

We placed 2nd at SmileHacks 2026 and won $400 and a guaranteed interview with the UofT Hatchery NEST program. During the interview we learned a lot about the VC space and how these investors choose companies and people to invest in.

Honestly, really proud of what we built in under 24 hours. The problem is real, the tech is real, and the path to deployment is clearer than we expected going in. This one's not done yet.