WinterStream AI

AI-powered commentary and analysis for watching the Olympics. Won MLH Best Use of Google Gemini at UTRA Hacks 2026.

Overview

With the 2026 Winter Olympics approaching, we wanted to rethink how people experience live sports. A lot of viewers don't fully understand the rules or context of what they're watching. For people with visual impairments, following a live event is even harder. There's no easy way to ask "wait, what just happened?" and get a real answer.

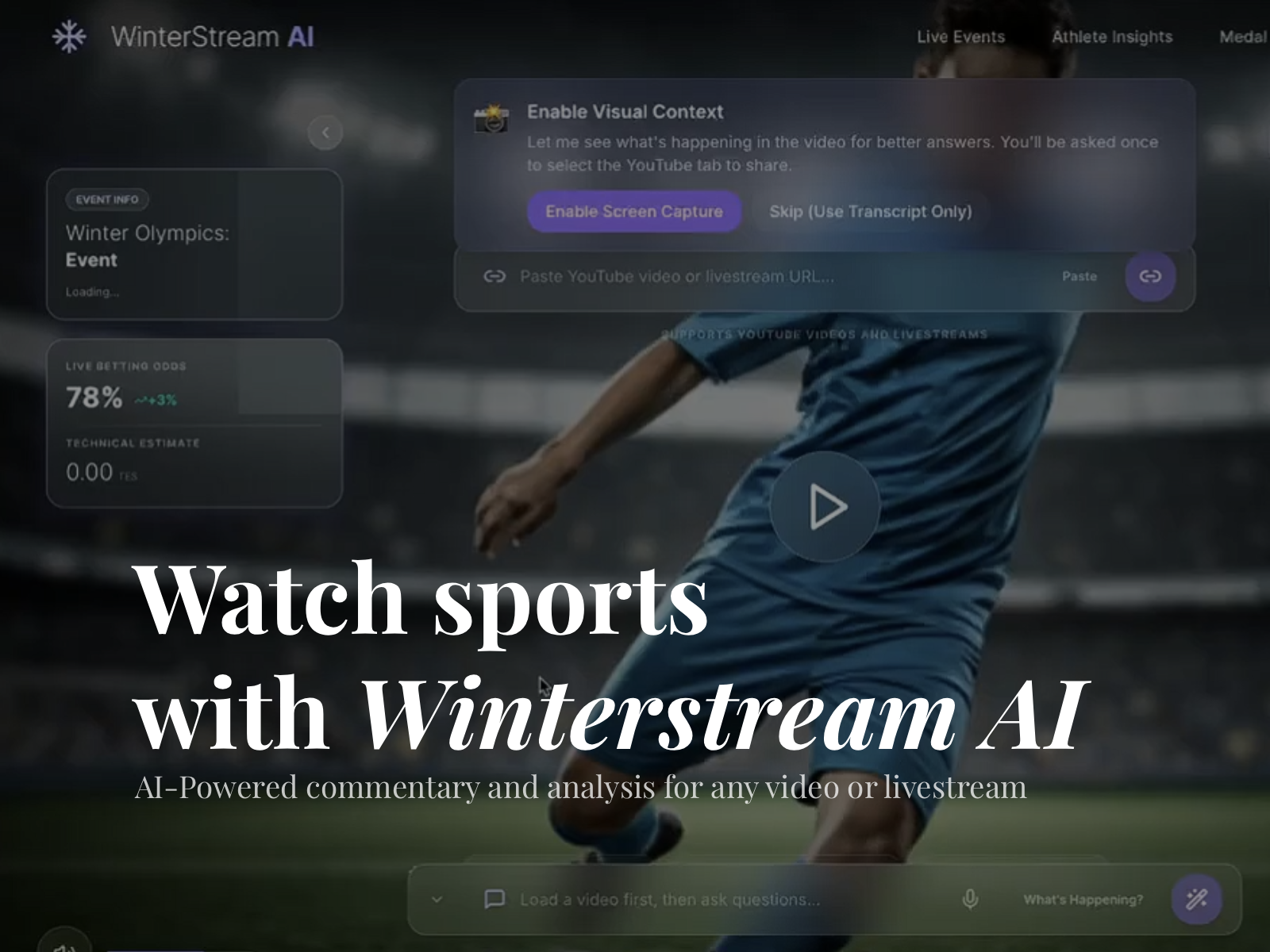

WinterStream AI fixes that. It's an accessibility-first, audio-centric Olympics companion that watches the stream with you, narrates what's happening, and answers your questions out loud in real time.

How It Works

WinterStream AI embeds any YouTube video or livestream and automatically fetches its transcript for context. Users can ask about what's happening either by typing a question or by saying "Hey Winter" followed by the question, and the answer is read back as natural-sounding speech using ElevenLabs text-to-speech. Ask "What just happened?" or "Why was that a penalty?" and you get a clear, contextual answer immediately.
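To make the voice trigger concrete, here is a minimal sketch of how a recognized utterance might be split into the wake phrase and the actual question. It assumes speech-to-text has already produced a plain string; the function name `extract_question` and the exact matching rules are hypothetical and not taken from the project's code.

```python
import re
from typing import Optional

# Hypothetical wake-phrase matcher. It tolerates the casing and punctuation
# that speech-to-text output often contains ("Hey, Winter... what's that?")
# and allows filler words before the wake phrase ("um, hey winter ...").
WAKE_PATTERN = re.compile(
    r"\bhey[\s,]+winter\b[\s,.!?:;-]*(.*)",
    re.IGNORECASE | re.DOTALL,
)


def extract_question(utterance: str) -> Optional[str]:
    """Return the text spoken after 'Hey Winter', or None if there is no question.

    None is returned both when the wake phrase is absent and when the user
    said only the wake phrase with nothing after it.
    """
    match = WAKE_PATTERN.search(utterance)
    if match is None:
        return None
    question = match.group(1).strip()
    return question or None


if __name__ == "__main__":
    print(extract_question("Hey Winter, what just happened?"))
    print(extract_question("um, hey winter why was that a penalty"))
    print(extract_question("what a run"))
```

Typed questions would skip this step entirely, since there is no wake phrase to strip. The `\b` after `winter` keeps words like "winterstream" from triggering the assistant by accident.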

On the backend, FastAPI mediates between the frontend and the AI layer, with WebSockets handling real-time messaging so the back-and-forth feels fluid and conversational. Google Gemini powers the question answering: it takes both the video transcript and the user's query as context and generates accurate, descriptive responses that are carefully worded to avoid visual language like "look at this" or "you can see here."
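A minimal sketch of how the transcript and question might be combined into a single prompt, plus a cheap check for visual language in the reply before it goes to text-to-speech. It assumes the transcript arrives as timestamped segments and that only the last 60 seconds matter for a question like "What just happened?". `Segment`, `recent_context`, `build_prompt`, `visual_phrases_in`, the 60-second window, the instruction wording and the phrase list are all hypothetical; the actual Gemini call and the WebSocket plumbing are left out.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Segment:
    start: float  # seconds from the start of the video (assumed format)
    text: str


# Hypothetical instruction text steering the model away from visual references.
SYSTEM_INSTRUCTIONS = (
    "You are WinterStream, an audio-only Olympics commentator. "
    "Answer using the transcript context and general knowledge of the sport. "
    "Describe events in words and never rely on visual references such as "
    "'look at this' or 'you can see here'."
)

# Hypothetical phrases that should not appear in a spoken answer.
VISUAL_PHRASES = ("look at", "you can see", "as shown", "over here", "watch this")


def recent_context(segments: Sequence[Segment], now: float, window: float = 60.0) -> str:
    """Join the text of segments whose start time falls in [now - window, now]."""
    return " ".join(s.text for s in segments if now - window <= s.start <= now)


def build_prompt(segments: Sequence[Segment], now: float, question: str,
                 window: float = 60.0) -> str:
    """Assemble instructions, recent transcript and the viewer's question."""
    context = recent_context(segments, now, window) or "(no transcript available)"
    return (
        f"{SYSTEM_INSTRUCTIONS}\n\n"
        f"Transcript (last {window:.0f} seconds):\n{context}\n\n"
        f"Viewer question: {question}\nAnswer:"
    )


def visual_phrases_in(answer: str) -> List[str]:
    """Return any visual phrases found in a model answer (case-insensitive)."""
    lowered = answer.lower()
    return [p for p in VISUAL_PHRASES if p in lowered]


if __name__ == "__main__":
    segs = [
        Segment(0.0, "Welcome to the free skate."),
        Segment(50.0, "She lands the triple axel."),
        Segment(100.0, "Scores are coming up."),
    ]
    print(build_prompt(segs, now=90.0, question="What just happened?"))
```

Putting the rule in the prompt does most of the work; scanning the output afterwards is a second line of defence, and a flagged answer could, for example, be regenerated before it is spoken.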

The UI is built screen-reader friendly from the ground up, with minimal reliance on visual cues. The whole experience is designed to work just as well with your eyes closed.

I think this project could be extended further to livestreams and videos of all types, not just the Winter Olympics. For example, an avid NBA fan could ask questions about the live statistics of a match without having to leave the stream or open another app. Or a student could ask questions about a documentary they're watching for class. The possibilities are endless.

Results

Won MLH Best Use of Google Gemini at UTRA Hacks 2026. (A software win at a hardware hackathon, lmao. There were two streams, hardware and software, but our robot failed because the organizers ran out of 3D-printed wheel axles and all of ours broke 😭)